💪 Core challenge

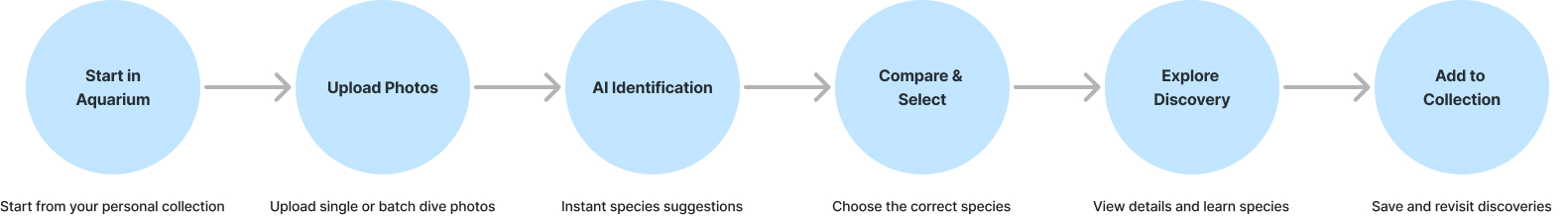

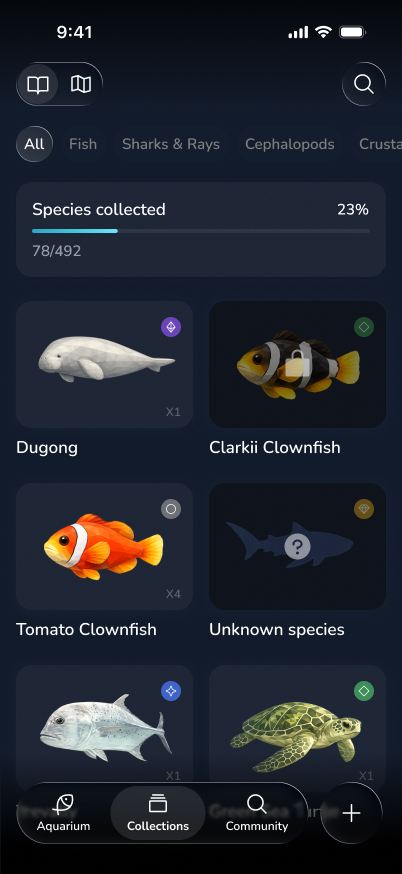

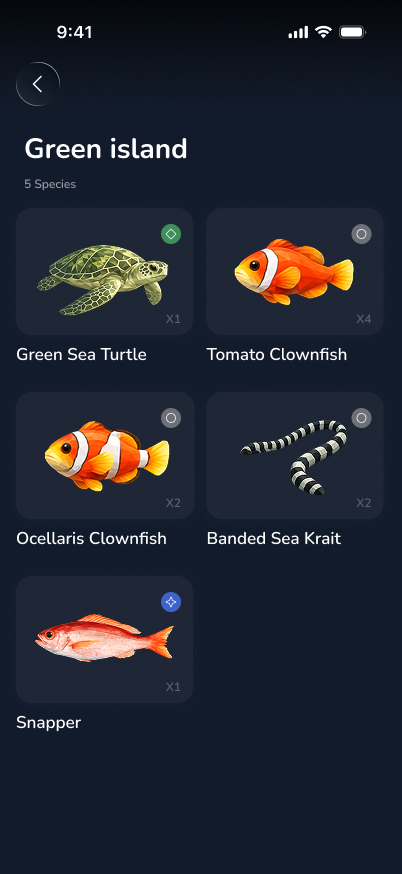

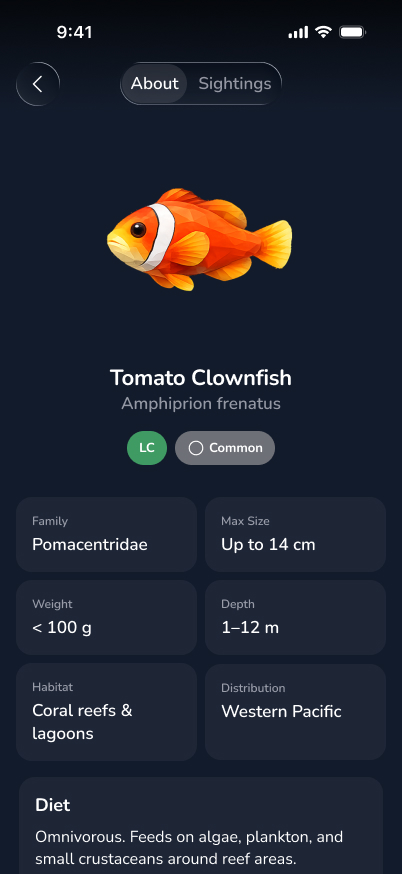

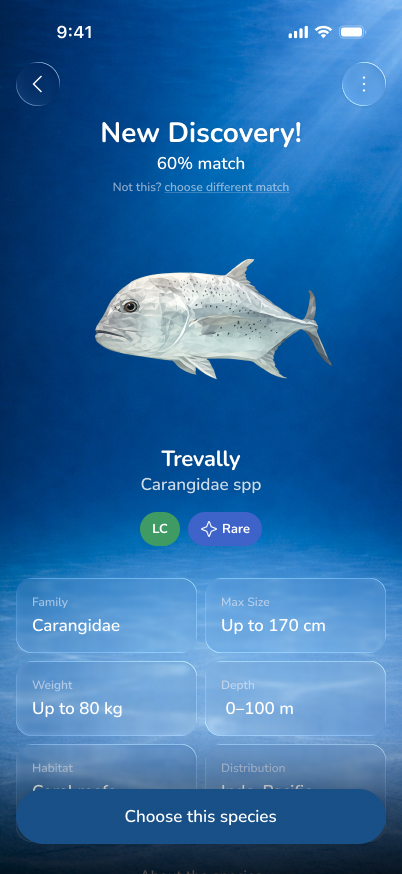

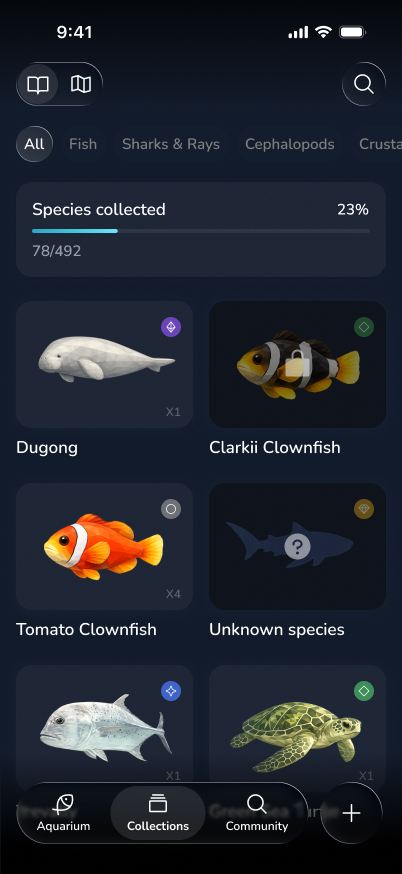

AquaGo is an AI-powered app that helps divers quickly identify marine species and turn each discovery into a lasting collection.

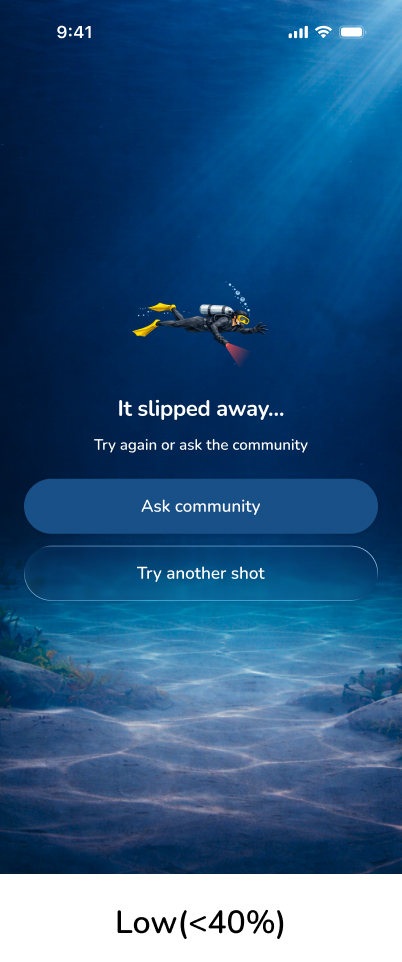

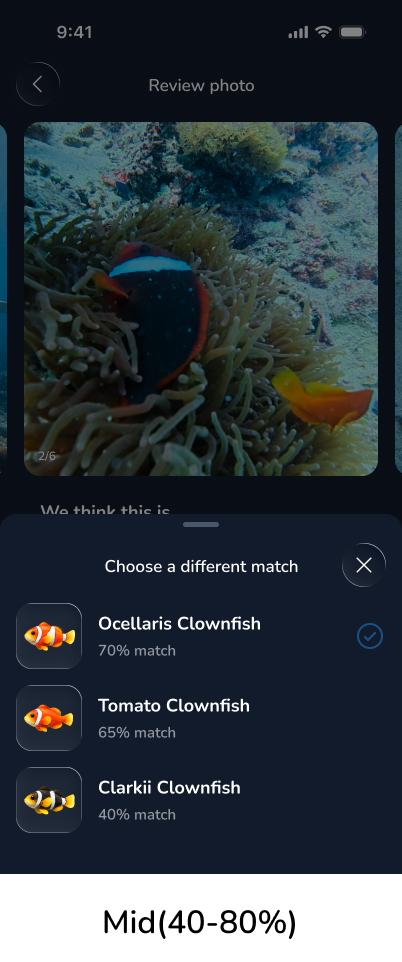

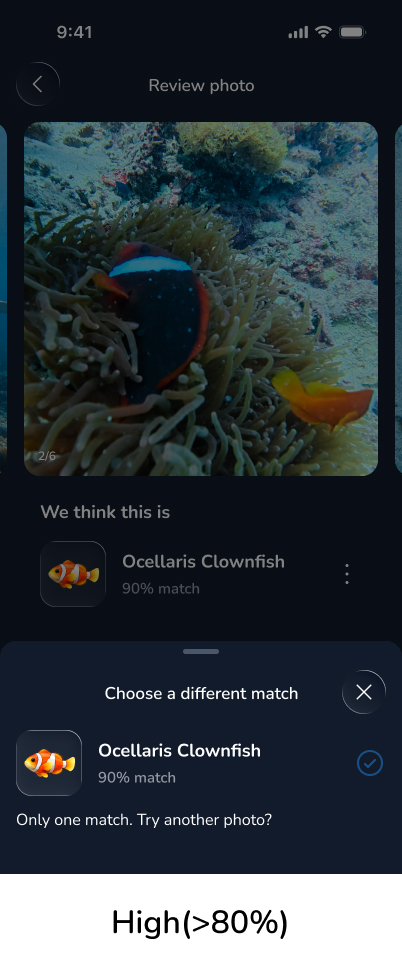

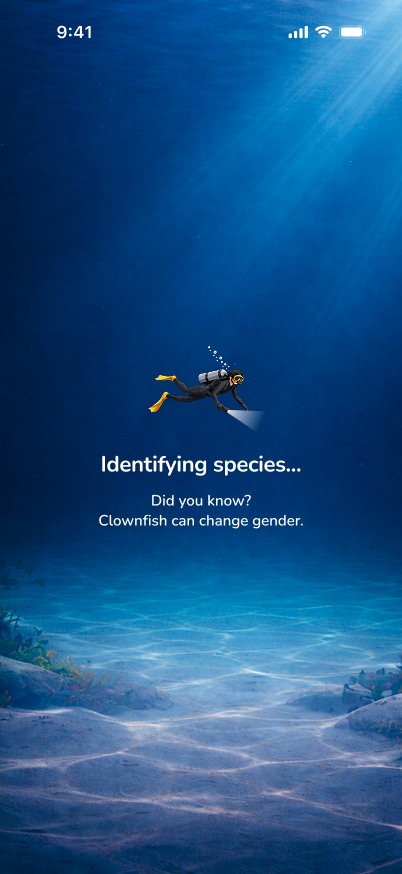

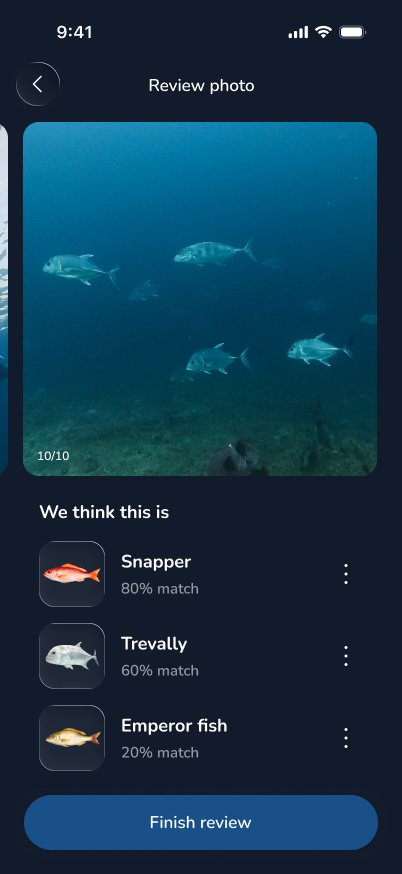

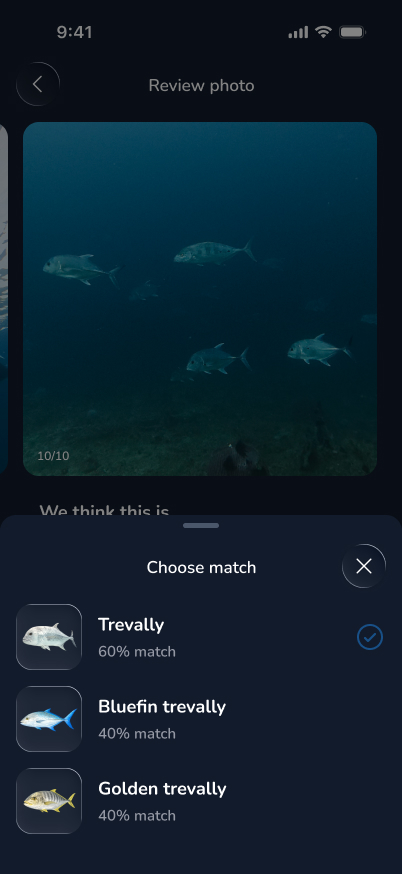

I focused on making identification fast and trustworthy even when image quality is low, prioritizing speed in the first interaction and building trust over time.

This shifts identification from a one-time action into a repeatable behavior loop.

How do you design AI that feels trustworthy when the environment makes perfect accuracy impossible?

The primary audience is recreational divers, with ocean enthusiasts as an extended user group.

After a dive, identifying marine species is fragmented, time-consuming, and often unreliable. Divers lose confidence in the results, and their excitement fades before the knowledge is retained, breaking the loop between experience and learning.

Divers reach a high-intent moment immediately after a dive, but fragmented tools and low confidence break the journey, turning curiosity into friction instead of continuation.

These insights pointed to a product that needed to solve three things in sequence: reduce friction, build habit, and enable identity.

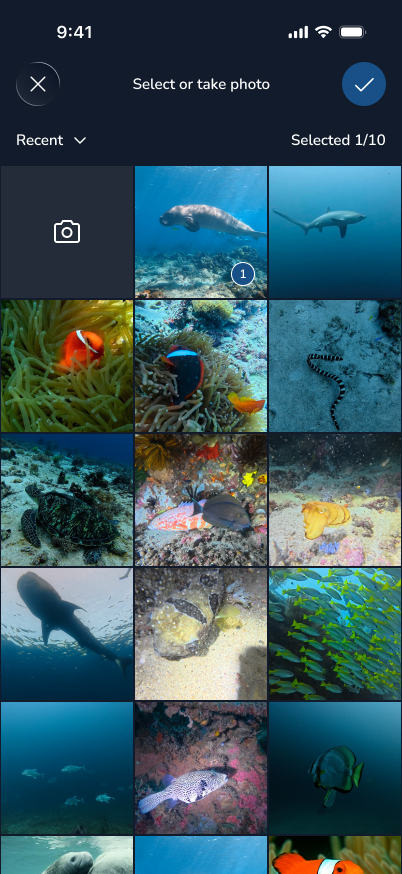

The experience is designed to be fast, intuitive, and centered on the high-intent moment immediately after a dive.